Several tools can help with creating test cases. So why haven't we succeeded in automating the Test Case Design process? In this short article I will explain how we can make huge progress on this topic if Test collaborates with Design!

4 Examples of Test Case Design

Let's imagine a business process that the design team documents on one page, and from which Test creates the test cases. In the first example the process is described in plain text. In that case it is not possible to automate test case design, nor to apply a formal test specification technique:

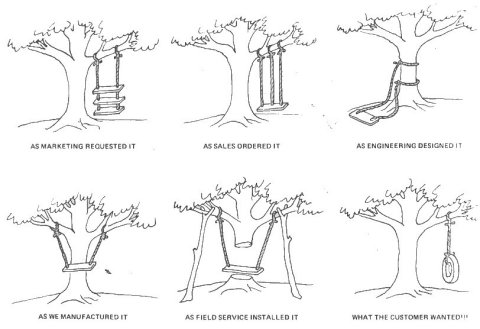

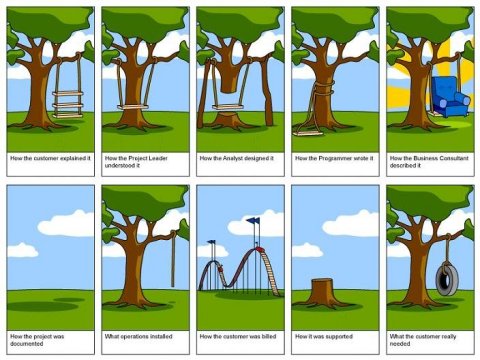

Example 1: Test Design from plain text: many interpretations and assumptions...

Creating test cases from plain text may look easy, but if you ask five test engineers to create the test cases, you get five different sets, with no insight into the quality of their coverage.

Choosing the process flow diagram technique to describe the business process results in fewer interpretations and assumptions, and therefore in much better test cases:

Example 2: Manual Test Design: Process Cycle Test (TMap®)

The advantage of formal Test Case Design techniques such as Process Cycle Test (PCT) is that they can be automated! How? Step 1 of deriving test cases with PCT is to identify the paths and path combinations within the process flow diagram. Step 2: instead of manually combining those path combinations into test cases, insert the path combinations (each with a short description) into a Test Design Tool. Step 3: the tool generates the test cases. Compared with manual test case design, this is much faster and requires less knowledge, but you do need a tool* (and a license):

Example 3: Using a Test Design Tool: much faster!
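The three PCT steps described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not how COVER or any other Test Design Tool works internally: the diagram is loop-free and written as a hypothetical dictionary that maps each activity to its successors, every node with more than one outgoing flow counts as a decision point, and `depth` stands for the PCT test depth level (1: every outgoing path of each decision point; 2: every combination of an incoming and an outgoing path). Test cases are then picked greedily from the start-to-end routes until every path combination is covered.

```python
from itertools import product

Edge = tuple[str, str]

# Hypothetical order process: each activity maps to the activities that can follow it.
ORDER_PROCESS: dict[str, list[str]] = {
    "start": ["check stock"],
    "check stock": ["reserve", "back-order"],  # decision point 1
    "reserve": ["payment"],
    "back-order": ["payment"],
    "payment": ["ship", "cancel"],             # decision point 2
    "ship": ["end"],
    "cancel": ["end"],
    "end": [],
}


def path_combinations(graph: dict[str, list[str]], depth: int) -> set[tuple[Edge, ...]]:
    """Step 1 (sketch): the path combinations to cover at test depth level 1 or 2."""
    if depth not in (1, 2):
        raise ValueError("this sketch only supports test depth level 1 or 2")
    incoming: dict[str, list[Edge]] = {node: [] for node in graph}
    for src, targets in graph.items():
        for dst in targets:
            incoming.setdefault(dst, []).append((src, dst))
    combos: set[tuple[Edge, ...]] = set()
    for node, targets in graph.items():
        if len(targets) < 2:  # assumption: only nodes with 2+ exits are decision points
            continue
        outgoing = [(node, dst) for dst in targets]
        if depth == 1:
            combos.update((edge,) for edge in outgoing)
        else:  # every incoming path combined with every outgoing path
            combos.update(product(incoming[node], outgoing))
    return combos


def all_routes(graph: dict[str, list[str]], node: str, end: str) -> list[list[str]]:
    """All start-to-end routes; assumes the diagram contains no loops."""
    if node == end:
        return [[node]]
    return [[node] + rest for nxt in graph.get(node, []) for rest in all_routes(graph, nxt, end)]


def design_test_cases(graph: dict[str, list[str]], start: str, end: str, depth: int = 2) -> list[list[str]]:
    """Steps 2 and 3 (sketch): greedily pick routes until every combination is covered."""
    required = path_combinations(graph, depth)
    routes = all_routes(graph, start, end)

    def covered_by(route: list[str]) -> set[tuple[Edge, ...]]:
        # Sliding window of `depth` consecutive paths along the route.
        edges = list(zip(route, route[1:]))
        windows = {tuple(edges[i:i + depth]) for i in range(len(edges) - depth + 1)}
        return windows & required

    uncovered = set(required)
    test_cases: list[list[str]] = []
    while uncovered:
        best = max(routes, key=lambda r: len(covered_by(r) & uncovered), default=None)
        gained = covered_by(best) & uncovered if best is not None else set()
        if not gained:
            raise ValueError("some path combinations lie on no start-to-end route")
        test_cases.append(best)
        uncovered -= gained
    return test_cases


for number, case in enumerate(design_test_cases(ORDER_PROCESS, "start", "end", depth=2), 1):
    print(f"TC{number}: " + " -> ".join(case))
```

For this hypothetical order process, test depth level 1 needs 2 test cases, while level 2 needs 4, because each route into the payment decision has to be combined with each route out of it. The greedy selection keeps the set small but does not guarantee the absolute minimum.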

Most of the time, designers create these process flow diagrams in modeling tools (such as MS Visio, Protos, ARIS, BiZZdesigner, ...). A common feature of these modeling tools is exporting models in XML format, and Model Based Testing tools* can read XML! So let's skip all the manual steps and generate test cases directly from the process flow diagram:

Example 4: Model Based Test Case Design
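A minimal sketch of that idea in Python, assuming the modeling tool exports BPMN 2.0 XML; the tools mentioned above each have their own export formats, so a real import would need a reader for the format at hand. It uses the standard library's `xml.etree.ElementTree` to read the `sequenceFlow` elements and turns every start-to-end route into one test case (all-paths coverage, assuming a loop-free model). The claim process, ids, and labels in the sample are hypothetical.

```python
import xml.etree.ElementTree as ET

BPMN = {"bpmn": "http://www.omg.org/spec/BPMN/20100524/MODEL"}

# Hypothetical BPMN 2.0 export of a small claim-handling process.
SAMPLE_MODEL = """
<bpmn:definitions xmlns:bpmn="http://www.omg.org/spec/BPMN/20100524/MODEL"
                  targetNamespace="http://example.com/claims">
  <bpmn:process id="claim">
    <bpmn:startEvent id="s" name="Claim received"/>
    <bpmn:exclusiveGateway id="g" name="Amount over 1000?"/>
    <bpmn:task id="t1" name="Manual review"/>
    <bpmn:task id="t2" name="Auto approve"/>
    <bpmn:endEvent id="e" name="Claim closed"/>
    <bpmn:sequenceFlow id="f1" sourceRef="s" targetRef="g"/>
    <bpmn:sequenceFlow id="f2" sourceRef="g" targetRef="t1"/>
    <bpmn:sequenceFlow id="f3" sourceRef="g" targetRef="t2"/>
    <bpmn:sequenceFlow id="f4" sourceRef="t1" targetRef="e"/>
    <bpmn:sequenceFlow id="f5" sourceRef="t2" targetRef="e"/>
  </bpmn:process>
</bpmn:definitions>
"""


def generate_test_cases(xml_text: str) -> list[list[str]]:
    """Turn every start-to-end route of the exported model into one test case."""
    process = ET.fromstring(xml_text.strip()).find("bpmn:process", BPMN)
    if process is None:
        raise ValueError("no BPMN process found in the export")
    # Readable labels: use the element's name, falling back to its id.
    labels = {el.get("id"): el.get("name") or el.get("id") for el in process}
    successors: dict[str, list[str]] = {}
    for flow in process.findall("bpmn:sequenceFlow", BPMN):
        successors.setdefault(flow.get("sourceRef"), []).append(flow.get("targetRef"))
    ends = {el.get("id") for el in process.findall("bpmn:endEvent", BPMN)}

    test_cases: list[list[str]] = []

    def walk(node: str, route: list[str]) -> None:
        route = route + [labels.get(node, node)]
        if node in ends:
            test_cases.append(route)
            return
        for nxt in successors.get(node, []):  # assumes the model has no loops
            walk(nxt, route)

    for start in process.findall("bpmn:startEvent", BPMN):
        walk(start.get("id"), [])
    return test_cases


for number, case in enumerate(generate_test_cases(SAMPLE_MODEL), 1):
    print(f"TC{number}: " + " -> ".join(case))
```

For the sample model this yields two test cases, one per branch of the gateway: Claim received -> Amount over 1000? -> Manual review -> Claim closed, and the same route via Auto approve.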

Model Based Testing tools* can generate test cases within minutes. Of course, before using the test cases you must do a sanity check to confirm that the tool interpreted the model correctly, but even so, no manual approach can beat that speed.
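Part of that sanity check can be automated. The Python sketch below is a hypothetical helper, not a feature of any particular tool: it compares the generated test cases with the model, written as a dictionary that maps each activity to its successors, and reports steps that do not exist in the model as well as flows that no test case exercises. It only checks structure; whether the tool interpreted the meaning of each activity correctly still needs a human review.

```python
# Hypothetical model: each activity maps to the activities that can follow it.
MODEL: dict[str, list[str]] = {
    "start": ["check"],
    "check": ["approve", "reject"],
    "approve": ["end"],
    "reject": ["end"],
    "end": [],
}


def sanity_check(model: dict[str, list[str]], test_cases: list[list[str]]) -> list[str]:
    """Report test steps missing from the model and model flows no test case exercises."""
    modelled = {(src, dst) for src, targets in model.items() for dst in targets}
    exercised: set[tuple[str, str]] = set()
    problems: list[str] = []
    for number, case in enumerate(test_cases, 1):
        for step in zip(case, case[1:]):
            if step not in modelled:
                problems.append(f"TC{number}: step {step[0]!r} -> {step[1]!r} is not in the model")
            exercised.add(step)
    for src, dst in sorted(modelled - exercised):
        problems.append(f"flow {src!r} -> {dst!r} is never exercised")
    return problems


# Hypothetical tool output with one invented step and one missing branch.
generated = [["start", "check", "approve", "end"], ["start", "check", "end"]]
for problem in sanity_check(MODEL, generated):
    print(problem)
```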

Collaborate!

Test Case Design can be automated (I'm already working with these tools on a daily basis).

But besides getting the right tool(s), there is an important precondition: the Test Basis must have a minimum level of quality.

For instance, instead of describing business processes in plain text, designers should specify them with activity diagrams or process flow diagrams (which is not (yet) common practice in design processes). To get an automated Test Design process, let's join forces: together, Design and Test can make projects much faster and cheaper!

*) Example of a Test Design/Model Based Testing Tool I personally often use: STaaS/COVER (Sogeti Netherlands)
